
Robotic drivers that turn existing fleets into autonomous vehicles

DriverAgent is a modernised physical AI driver that upgrades existing commercial vehicles for autonomous operation — giving fleets a faster, lower-cost path to deployment.

What makes Osmosis AI unique


Most autonomous vehicle companies depend on purpose-built vehicles, deep OEM integration, or expensive new hardware stacks.

Osmosis AI takes a different path.

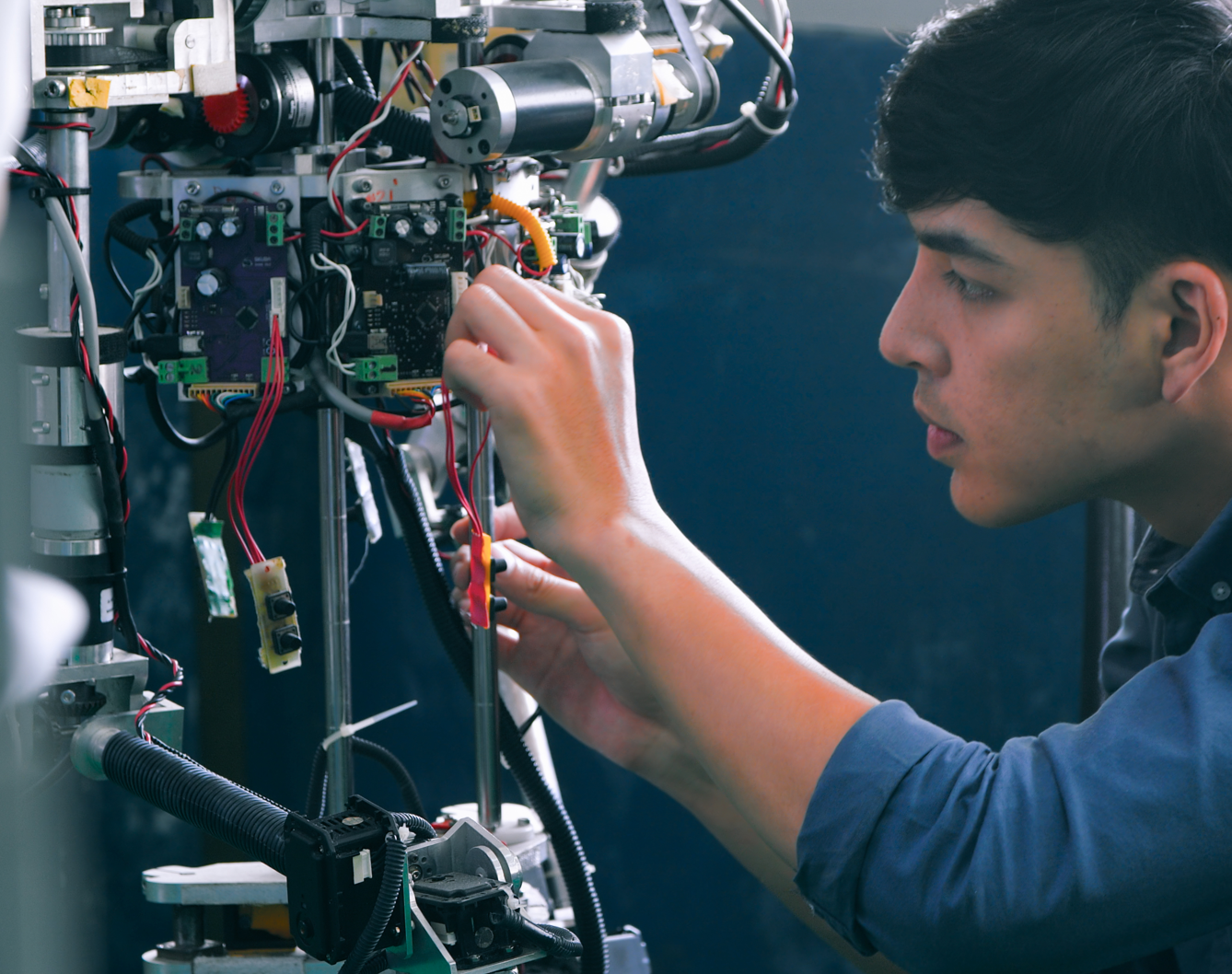

We build a robotic driver that can be installed in existing commercial vehicles and physically operate the vehicle itself. DriverAgent combines robotic actuation, multi-camera perception, and an embodied AI driving stack so the system can observe, reason, and act in the real world.

That means fleets do not need to wait for future vehicle platforms to adopt autonomy. They can start from the vehicles they already own.

Physical AI, not just software: DriverAgent does not only tell a vehicle what to do. It can physically drive it.

Built for existing fleets: A practical upgrade path for commercial vehicles already in operation.

Deployment-first strategy: We start where autonomy is already commercially and operationally realistic.

The problem we solve

Fleet operators are under pressure from driver shortages, rising labour costs, vehicle downtime, and operational inefficiencies in yards, depots, and short-route logistics.

At the same time, most autonomous vehicle solutions are still too expensive, too complex, or too dependent on new vehicle platforms to deploy at meaningful scale.

So the industry is stuck between two bad options: keep relying on hard-to-staff manual operations, or wait years for fully new autonomous fleets to become affordable.

Osmosis AI closes that gap.

We help fleets upgrade what they already have, automate repeatable operations sooner, and create a more realistic path to autonomy.

How DriverAgent works

DriverAgent combines multi-camera vision, world-model intelligence, and robotic controls to operate a vehicle like a human driver. It sees the environment, understands what is happening around the vehicle, decides the safest next action, and physically controls steering, braking, acceleration, and gear selection.
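The see, understand, decide, act cycle described above can be illustrated with a deliberately small sketch. Everything in it is a hypothetical stand-in chosen for illustration: the `Observation` and `Command` types, the `decide` function, the gain, and the thresholds are assumptions and do not describe DriverAgent's actual interfaces or logic.

```python
from dataclasses import dataclass


@dataclass
class Observation:
    # Hypothetical fused output of a multi-camera perception stage.
    obstacle_distance_m: float  # distance to the nearest obstacle ahead
    lane_offset_m: float        # lateral offset from lane centre (+ = right)


@dataclass
class Command:
    # Hypothetical targets for the robotic actuators.
    steering: float  # -1.0 (full left) to 1.0 (full right)
    throttle: float  # 0.0 to 1.0
    brake: float     # 0.0 to 1.0
    gear: str        # e.g. "D", "R", "N", "P"


def clamp(value: float, low: float, high: float) -> float:
    return max(low, min(high, value))


def decide(obs: Observation, stop_distance_m: float = 5.0) -> Command:
    """Pick the next action from one observation (toy rule, not a real planner)."""
    # Steer back toward the lane centre with a simple proportional gain.
    steering = clamp(-0.5 * obs.lane_offset_m, -1.0, 1.0)
    # Brake fully if an obstacle is inside the assumed stopping distance.
    if obs.obstacle_distance_m <= stop_distance_m:
        return Command(steering=steering, throttle=0.0, brake=1.0, gear="D")
    # Otherwise keep moving at a gentle, fixed throttle.
    return Command(steering=steering, throttle=0.3, brake=0.0, gear="D")


def run(observations: list[Observation]) -> list[Command]:
    """One decision per observation: the observe, decide, act loop in miniature."""
    return [decide(obs) for obs in observations]
```

A real driving stack would replace the hand-written rule in `decide` with learned perception and planning, but the shape of the loop (observation in, actuator command out) stays the same.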


Why world-model training matters

Training a robot driver to understand what happens next

Driving is not only about reacting to what is visible in a single frame. A safe driver must understand movement, intention, uncertainty, and what could happen next.

That is why our approach goes beyond simple detection.

We use world-model-driven training to help DriverAgent learn how environments evolve over time — so it can better predict motion, plan safer behaviour, and respond more naturally in dynamic situations.

This helps move autonomous driving closer to how humans operate: seeing the scene, anticipating change, and acting with context instead of only following fixed rules.
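The difference between reacting to a single frame and anticipating what happens next can be shown with a toy example. The sketch below uses a hand-written constant-velocity rollout as a stand-in for a learned world model. That substitution is an assumption made for illustration, as are the `Agent` type, the path dimensions, and the time horizon. It also ignores the vehicle's own motion to keep the arithmetic simple.

```python
from dataclasses import dataclass


@dataclass
class Agent:
    # Hypothetical tracked road user, in the vehicle's frame of reference.
    x: float   # metres ahead of the vehicle along its path
    y: float   # lateral offset from the vehicle's path, metres
    vx: float  # velocity along the path, m/s
    vy: float  # lateral velocity, m/s


def rollout(agent: Agent, horizon_s: float, dt: float = 0.5) -> list[tuple[float, float]]:
    """Predict future (x, y) positions, assuming constant velocity."""
    steps = int(round(horizon_s / dt))
    return [
        (agent.x + agent.vx * dt * k, agent.y + agent.vy * dt * k)
        for k in range(1, steps + 1)
    ]


def in_path(x: float, y: float, half_width_m: float = 1.5, lookahead_m: float = 20.0) -> bool:
    """True if a position lies inside the assumed corridor ahead of the vehicle."""
    return 0.0 <= x <= lookahead_m and abs(y) <= half_width_m


def should_slow(agent: Agent, horizon_s: float = 3.0) -> bool:
    """Slow down if the agent is in the path now or is predicted to enter it."""
    now = in_path(agent.x, agent.y)
    later = any(in_path(x, y) for x, y in rollout(agent, horizon_s))
    return now or later
```

For example, a pedestrian 10 m ahead and 4 m to the side, walking toward the path at 1.5 m/s, is outside the corridor in the current frame, yet `should_slow` flags them because the rollout places them inside it within the horizon. A detector that looks only at the current frame would miss this case.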

Why upgrade wins

The world does not need to scrap millions of working vehicles before autonomy can begin.

Osmosis AI is built around the reality of brownfield fleets. Operators already own vehicles, understand their routes, and need solutions that fit existing operations.

By upgrading existing vehicles instead of replacing them, DriverAgent can help reduce adoption cost, shorten deployment timelines, and open a more practical route into automation.

Benefit points

Use existing fleet assets

Reduce the need for expensive vehicle replacement

Start with repeatable and controlled operating domains

Expand capabilities over time with software, data, and validation

Where we start first

Depot operations / Yard movements

Urban mobility

Public transportation

Middle mile / Hub-to-hub repeat routes

Safety and deployment mindset

Autonomy is not only a software challenge. It is a full-system challenge involving robotics, vehicle control, fleet operations, monitoring, maintenance, and safety evidence.

DriverAgent is being developed with a deployment mindset: controlled testing, operational boundaries, continuous logging, supervised validation, and a staged path from pilot environments toward larger-scale use.

We believe practical autonomy will be won by systems that combine strong AI with real-world operational discipline.

Built with deployment discipline from day one